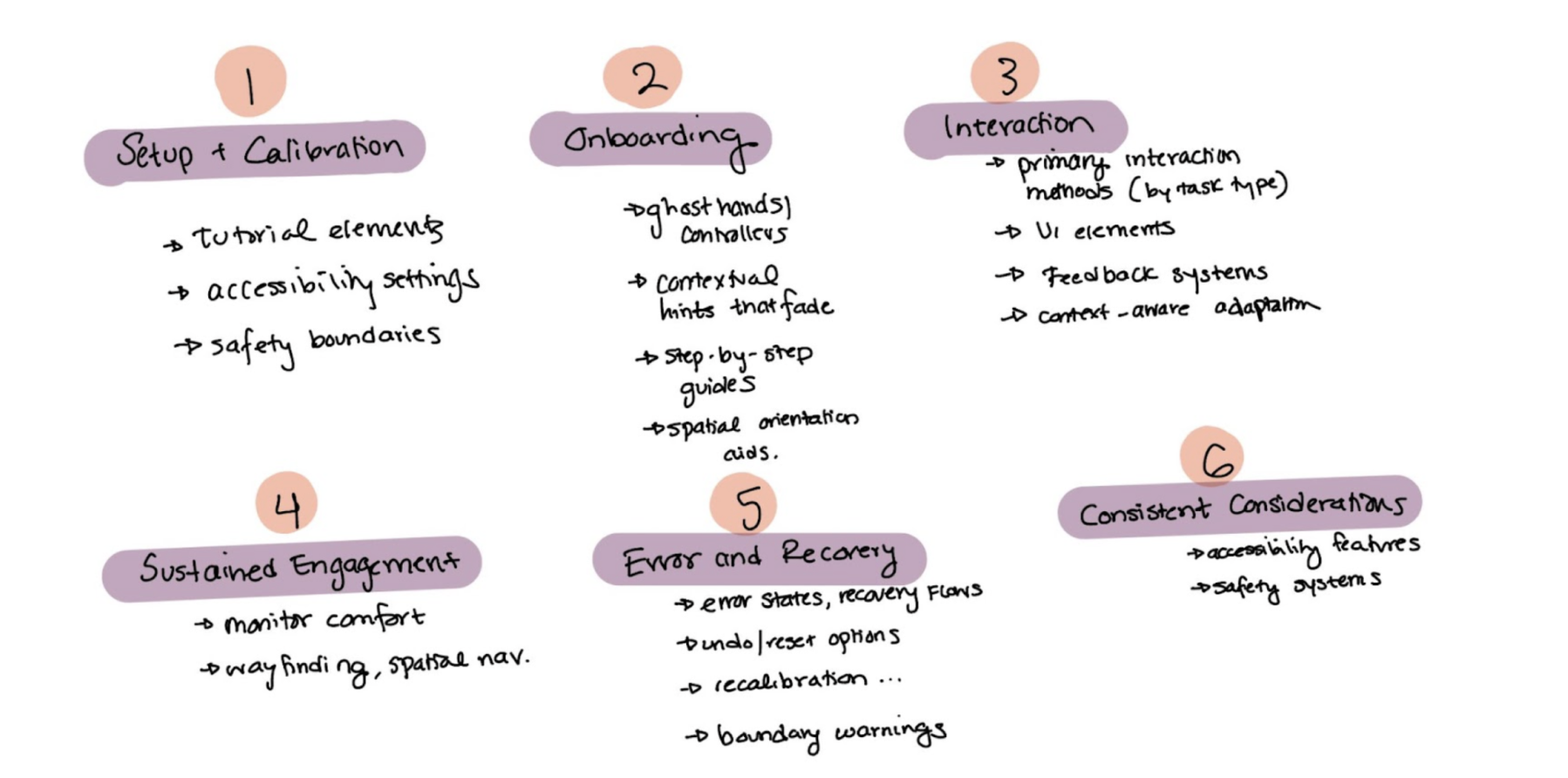

Before talking to anyone, I dug into academic papers and design articles to understand the current landscape. The paper Virtual Reality User Interface Design: Best Practices and Implementation (Mehmedova et al.) explicitly states there's a lack of unified design guidelines for XR and proposes categories like general VR principles, ergonomic design, onboarding, and cybersickness prevention.

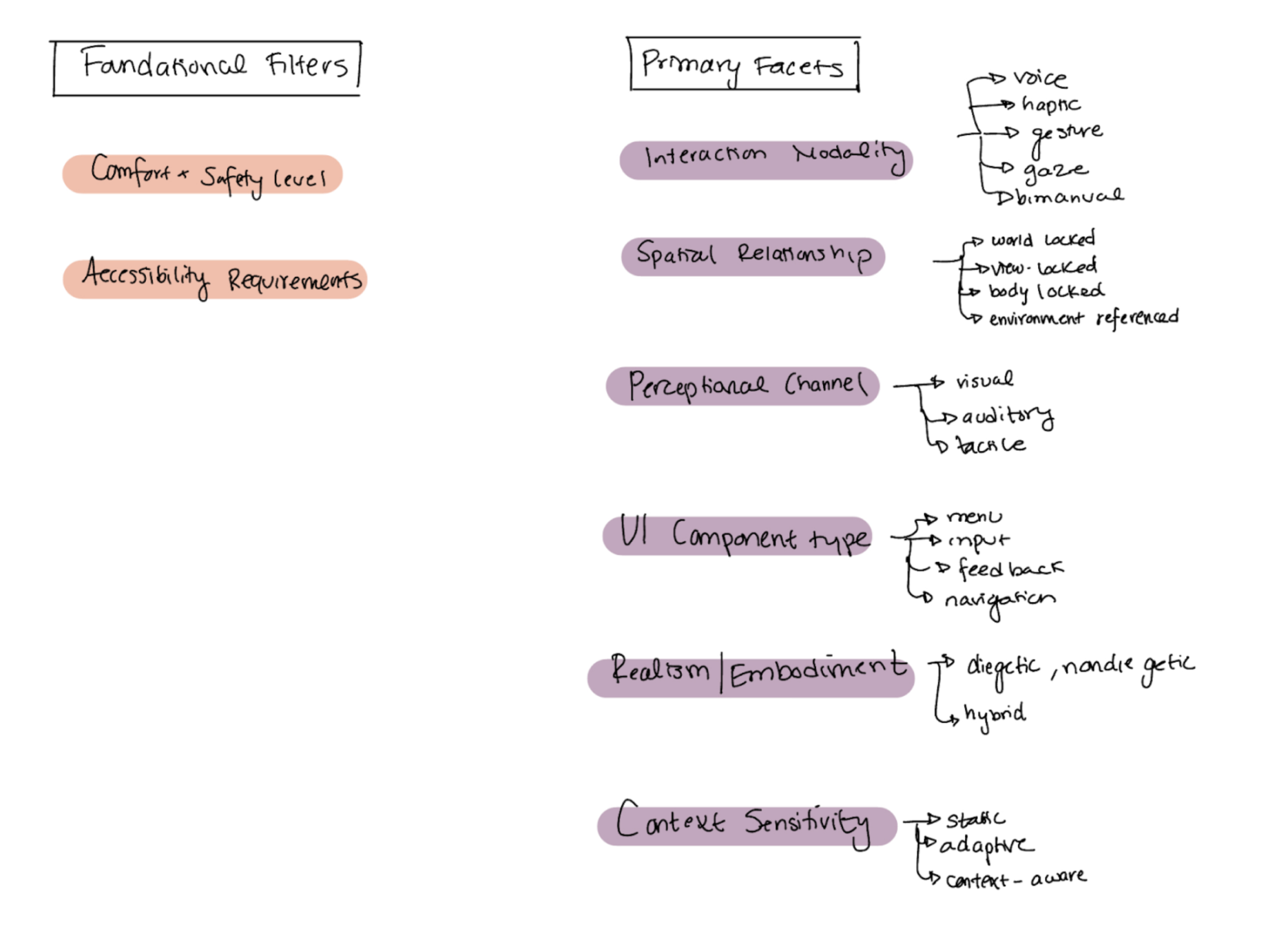

Davari's Towards Context-Aware Adaptation in Extended Reality offered a different lens. It organizes XR interfaces by how they adapt to context through content design, presentation design, and input design, which helped me see XR elements as interconnected systems rather than isolated components. Finally, Sahu's practitioner-focused article "Designing for the Immersive World: A UX Designer's Guide to AR, VR, and XR" provided a more approachable framework organized around devices, core UX principles (accessibility, comfort, spatial information, natural interactions), and use cases.

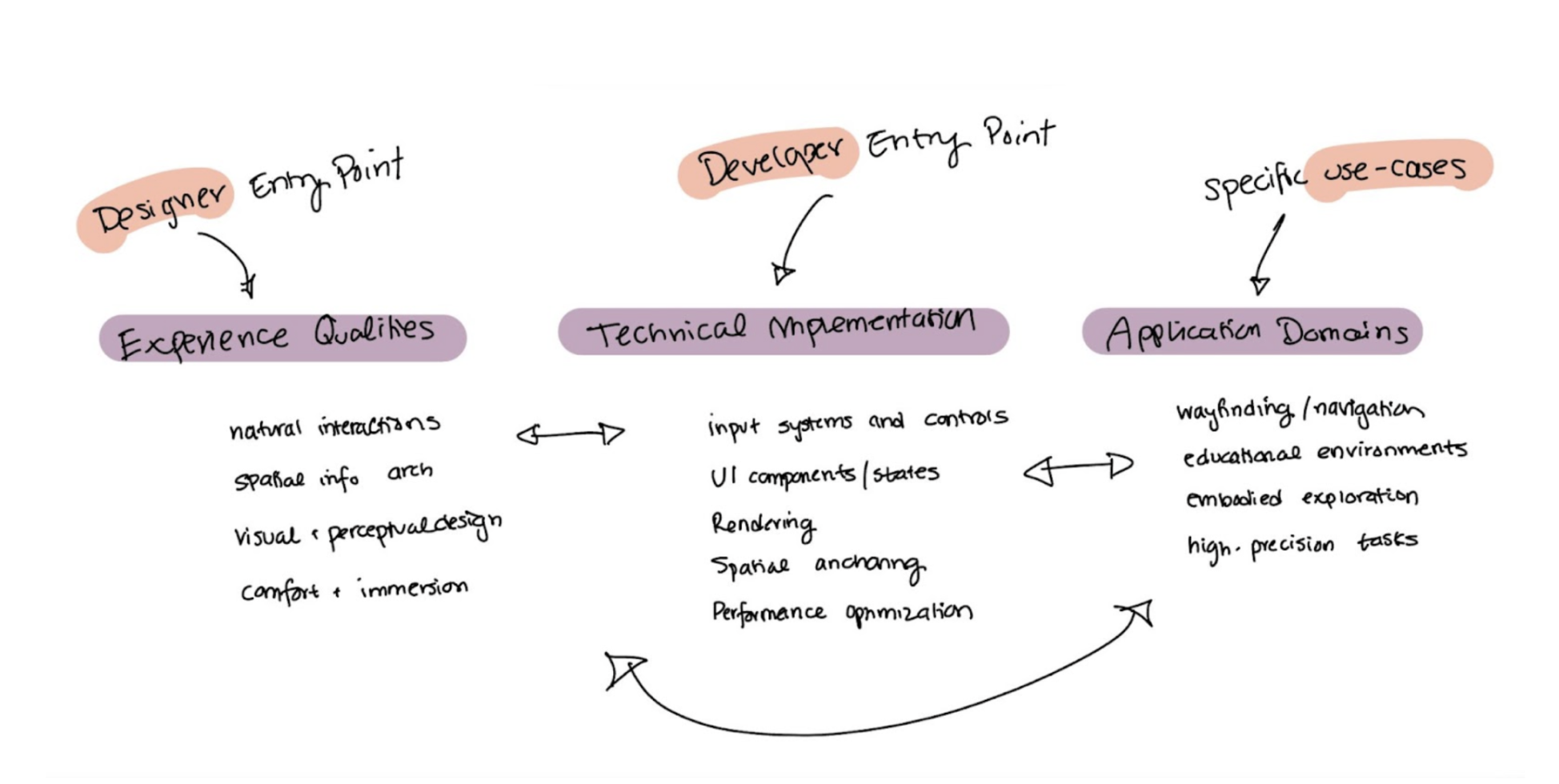

These sources gave me some critical insights: there's no standard way to organize XR knowledge, different frameworks serve different purposes, and the best approaches acknowledge that XR design is contextual and interconnected. But the literature didn't tell me how actual designers and developers think about organizing this information when they're doing their work. That's where user research came in.

I conducted six in-depth interviews with three designers and three developers from Carnegie Mellon and UC Berkeley, all of whom have experience working on XR projects. I wanted to understand how they conceptualize interface components, what resources they use, and how they make design decisions.

I had participants organize 105 XR design elements into categories that made sense to them. I gave them cards with terms like "gaze control," "radial menus," "boundary feedback," and "motion sickness prevention." These are the nitty-gritty details that go into designing XR experiences.

The messiness was actually the most critical insight. XR elements don't fit into neat boxes because the decisions are contextual, embodied, and interdependent.